Text Analysis of Martha Ballard’s Diary (Part 1)

"mr Ballard left home bound for Oxford. I had been Sick with the Collic. mrs Savage went home. mrs foster Came at Evening. it snowd a little."

This is the first entry in the diary of Martha Ballard, a rural Maine midwife who kept an extensive diary between 1785 and 1812 and whose life was immortalized in 1990 by the historian Laurel Thatcher Ulrich’s award-winning A Midwife’s Tale. Over the course of nearly three decades, Ballard kept a meticulous, near-daily accounting of her life spanning nearly 10,000 entries.

When reading A Midwife’s Tale, I was struck by how readily the text would seem to lend itself to digital analysis. In an interview, Ulrich noted, “The very thing that had attracted me to the diary in the first place was also the thing that made it difficult to work with. I mean there’s just so much.” To ground herself, she began by simply counting things: “And I would go day by day for every other year of the diary, and I would tick off what was in each entry: baking or brewing, spinning or washing, or trading, sewing, mending, deliveries, general medical accounts, going to church, visitors, people coming for meals, etc.” Because of the sprawling scope, she took this quantitative approach only for the even-numbered years in the diary. The fact that she was working in the late eighties without a computer makes her work even more impressive.

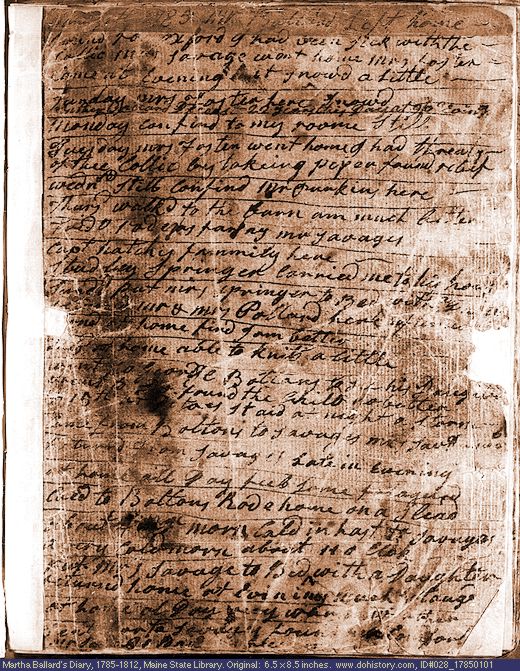

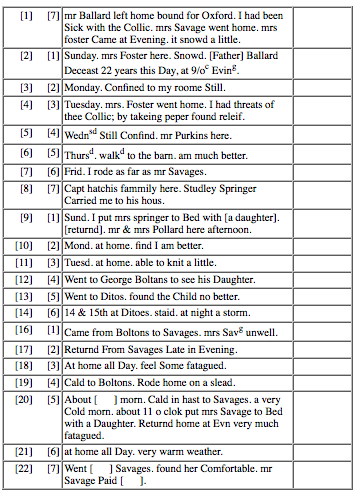

After poking around online I came across DoHistory.org, a website developed and maintained by the Film Study Center at Harvard University and hosted by (who else, really) George Mason's CHNM. The website presents the diary to the public in two formats: the viewer can either browse through photographed pages of the diary or read the transcript of the pages (transcribed through a monumental effort by Robert R. McCausland and Cynthia MacAlman McCausland).

When I realized the entire diary was online, it got me thinking about possibilities for text mining. As an aspiring digital humanist with few “hard” skills beyond basic GIS, I had been meaning to learn how to program for quite some time. In Martha Ballard’s diary, I had an intriguing source of data with which to do so. Now I just had to learn how to program. With the patient help of several programming-savvy family members, I gradually learned the basics of Python and how to apply it to Martha Ballard’s diary. What follows are the first steps we took to process the diary’s raw data into an accessible digital format.

Process

At first, I briefly considered learning how to scrape the text of the diary off the website. After some investigation, I decided that was a little beyond my abilities, so I copped out and took the much easier route: I emailed Kelly Schrum at CHNM, who kindly forwarded my request to Ammon Shepherd, who in turn sent me a zip file containing 1,431 html documents, one for each page of the diary. Each html file of the transcribed diary is a basic three-column table. My first step was to find a way to strip out the html tags and organize the text into a systematic database of individual entries. Fortunately, Ballard’s meticulousness and consistency lent themselves well to such an approach.

The diary’s format translates quite nicely into a list of lists: the “main” diary is a list of all the entries, and each entry is a list in and of itself. The first program we wrote opened each html file and extracted the different sections of text (which were conveniently marked by html tags). Iterating through each entry allowed us to separate the different columns in her diary into different items in the list. Here is the breakdown of our “list of lists”:

- Diary
  - Entry
    - Date
      - Month
      - Day
      - Year
    - Day of the Week
    - Main Text of Entry
    - Day Summaries (Column 3 of actual diary entry)
    - Birth(s) (Recorded in Column 1 of actual diary entry)
      - Date
      - Entry

In creating the list, we had to separate the raw data from the html tags that formatted it. Fortunately, the folks who originally built the html files formatted them so systematically that distilling one from the other was quite straightforward. A Python module called pickle allowed us to export the list of entries as a single, manageable file that we could then easily import into future programs to manipulate.
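To make the tag-stripping and pickling steps a little more concrete, here is a minimal sketch, not the program we actually wrote. It assumes each page is a plain three-column table; the sample markup, the placeholder second row, the `TableRowParser` class, and the `parse_page` helper are all made up for illustration, since the real files' markup isn't reproduced here. It also stops at the three raw columns and does not split out dates or weekdays.

```python
import os
import pickle
import tempfile
from html.parser import HTMLParser

# Hypothetical sample page. The first row reuses an entry quoted in this post;
# the second row is placeholder text, not real diary content.
SAMPLE_HTML = """
<table>
<tr><td></td><td>Tuesday. mrs. Foster went home. I had threats of thee Collic; by takein peper found releif.</td><td></td></tr>
<tr><td>placeholder column 1</td><td>placeholder <i>main</i> text</td><td>placeholder column 3</td></tr>
</table>
"""


class TableRowParser(HTMLParser):
    """Collect the text of every <td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []     # finished rows, each a list of cell strings
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Text outside a cell (such as newlines between rows) is ignored.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag == "td" and self._cell is not None:
            # Join the fragments and collapse runs of whitespace.
            self._row.append(" ".join("".join(self._cell).split()))
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def parse_page(html_text):
    """Return one list of three column strings per table row."""
    parser = TableRowParser()
    parser.feed(html_text)
    parser.close()
    return parser.rows


diary = parse_page(SAMPLE_HTML)

# Export the list to a single file with pickle, then load it back.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "diary.pickle")
    with open(path, "wb") as handle:
        pickle.dump(diary, handle)
    with open(path, "rb") as handle:
        restored = pickle.load(handle)

print(restored[0][1])
```

The round trip through a temporary directory is only there to keep the sketch tidy; in practice the pickle file would be saved somewhere permanent so later programs could load it.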

For example, the third entry in the diary would translate into something like this:

- Diary
  - Entry (3)
    - Date
      - 1 (January)
      - 3
      - 1785
    - Day of the Week
      - 3 (Tuesday - Ballard numbered the weekdays, beginning with Sunday as 1)
    - "Tuesday. mrs. Foster went home. I had threats of thee Collic; by takein peper found releif."
    - Empty
    - Empty
    - Entry (3)

The list allows us to access pieces of information by “calling” their position. It helped me to think of the entire diary list as a warehouse containing almost 10,000 boxes (entries), with each box containing five compartments, and the first of those compartments divided into three sub-compartments. If you were to open any of the boxes (entries) and look inside the first compartment, then inside its first sub-compartment, you would always find a number representing the month of that particular entry. If you were to look inside the third compartment of the entry/box, you would always find the main text for that day’s entry.
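In Python terms, “calling” a position means indexing into the nested list. The snippet below is a small sketch using the third entry from above; the variable names are my own, and `None` stands in for the compartments listed as “Empty”. Keep in mind that Python counts positions from zero, so the first compartment is index 0 and the third is index 2.

```python
# One entry laid out as five compartments:
# date, day of the week, main text, day summary, births.
entry = [
    [1, 3, 1785],  # date: month, day, year
    3,             # day of the week (Ballard numbered Sunday as 1)
    "Tuesday. mrs. Foster went home. I had threats of thee Collic; "
    "by takein peper found releif.",
    None,          # day summary (empty for this entry)
    None,          # births (empty for this entry)
]

# The diary is simply a list of such entries.
diary = [entry]

# First box, first compartment (the date), first sub-compartment (the month).
month = diary[0][0][0]

# First box, third compartment (the main text).
main_text = diary[0][2]

print(month)
print(main_text)
```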

The advantage of setting up the data in a list structure is the ability to access these specific pieces of information easily and to compare them across entries. In many ways, processing the text to make it readable and programmable is one of the biggest challenges of text mining. Deciding on the most logical way to organize and break down over 1,400 files lays the groundwork for the fun part: writing programs to actually analyze the diary of Martha Ballard.
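As a rough illustration of what comparing across entries can look like, the sketch below tallies entries by month and by year. The four entries are placeholders invented for the example (only the dates matter here), and `make_entry` is a hypothetical helper, not part of our actual program.

```python
from collections import Counter


def make_entry(month, day, year, text):
    """Build a placeholder entry with the five-compartment layout."""
    return [[month, day, year], None, text, None, None]


# Placeholder entries, not real diary content.
diary = [
    make_entry(1, 1, 1785, "placeholder text a"),
    make_entry(1, 2, 1785, "placeholder text b"),
    make_entry(2, 1, 1785, "placeholder text c"),
    make_entry(1, 1, 1786, "placeholder text d"),
]

# Because the month and year always sit in the same position,
# one line is enough to tally them across every entry.
entries_per_month = Counter(entry[0][0] for entry in diary)
entries_per_year = Counter(entry[0][2] for entry in diary)

print(entries_per_month)
print(entries_per_year)
```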

***Special-edition sneak preview of future posts in this series***

A simple counting program reveals that the main text of Martha Ballard's diary alone contains 377,315 words, spanning I-couldn't-make-this-number-up 9,999 entries. That is a lot of data to play with.
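For the curious, a counting program along these lines can be very short. This is a sketch rather than the exact program we ran: it assumes a “word” is anything separated by whitespace, and it uses only the two entries quoted in this post, with `None` filling the compartments I haven't reproduced here. The `count_words` name is my own.

```python
def count_words(diary):
    """Add up the whitespace-separated words in each entry's main text."""
    return sum(len(entry[2].split()) for entry in diary)


# Two entries quoted earlier in this post; compartments other than the
# main text (index 2) are left as None because they are not needed here.
diary = [
    [None, None,
     "mr Ballard left home bound for Oxford. I had been Sick with the Collic. "
     "mrs Savage went home. mrs foster Came at Evening. it snowd a little.",
     None, None],
    [[1, 3, 1785], 3,
     "Tuesday. mrs. Foster went home. I had threats of thee Collic; "
     "by takein peper found releif.",
     None, None],
]

print("entries:", len(diary))
print("words:", count_words(diary))
```

A different definition of a word (for instance, stripping punctuation first) could shift the total slightly.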