Professor vs. ChatGPT: The Grading Showdown

This summer, I’m diving into how teachers can use Generative AI. My first foray began with a simple premise: let’s use AI to automate the boring stuff. When I thought about the parts of my job that are both tedious and time-intensive, I immediately thought of grading papers. Before tackling the thorny question of whether we should use Generative AI to grade student papers, I wanted to find out if Generative AI could grade them. To be a bit more specific:

- Can Generative AI accurately grade student writing? In other words, can it reliably sort out the A’s from the B’s from the C’s?

- Can Generative AI give detailed, high-quality feedback on student writing?

To find out, I browsed OpenAI’s directory of Custom GPTs and chose one called Essay Grader Professor, developed by Stephanie Shasteen and described as “an academic GPT specializing in essay grading and tailored feedback.” Let’s put it to the test!

🧪 Testing It Out 🧪

What kind of measurements should we use to evaluate a Custom GPT like Essay Grader Professor? I figured the best way to test it out would be to pit its grading abilities against my own.

In my Fall 2019 semester of History of the Western U.S., I had my students write a Pop Culture Review: a 4-5 page essay reviewing a piece of pop culture loosely within the “Western” genre, summarizing it and evaluating its historical context.

I then uploaded the rubric I had used to grade the essays across five categories (Writing Mechanics, Analysis, Evidence, Structure, and Historical Perspective) and wrote the following opening prompt:

Me:

Please read the assignment description below. I am also attaching the writing rubric to use. The students are not required to use a particular citation style and do not deduct points for citation formatting (but you can evaluate their sources and how they use them). Focus more on their writing mechanics and argumentation, and less on their research/sources.

Essay Grader Professor GPT:

Great! I have reviewed the assignment description and the grading rubric. The rubric evaluates essays based on Writing Mechanics, Analysis, Evidence, Structure, and Historical Perspective. Now, please upload the first essay for evaluation. I will use the provided rubric to assess the essay and provide detailed feedback.

📝 How Did It Do? 📝

Can Generative AI accurately grade student writing?

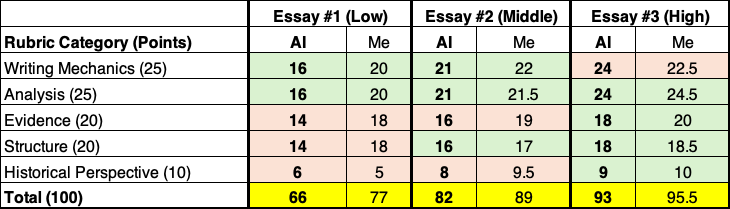

Here’s a snapshot of the Custom GPT’s essay scores across five rubric categories compared to my own. Scores in green fell within the same range for the given rubric category. For example, under Writing Mechanics the Custom GPT might have given a 16 where I gave a 20, but both scores fell within the same rubric range of 16-21 points (“writing lacks clarity and contains many errors and/or deficiencies in grammar, syntax, or phrasing”). Scores in red fell within a different range on the rubric.
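The green/red comparison can be made concrete with a small Python sketch. Only the 16-21 band for Writing Mechanics comes from the rubric quoted above; the other band boundaries are assumed placeholders, and `band_for` and `same_band` are hypothetical helper names used purely for illustration.

```python
# Bands for the 25-point Writing Mechanics category, as inclusive (low, high) pairs.
# Only (16, 21) comes from the rubric quoted above; the other two bands are
# assumed placeholders for illustration.
WRITING_MECHANICS_BANDS = [(0, 15), (16, 21), (22, 25)]


def band_for(score, bands):
    """Return the (low, high) band that contains the score."""
    for low, high in bands:
        if low <= score <= high:
            return (low, high)
    raise ValueError(f"score {score} is outside every band")


def same_band(score_a, score_b, bands):
    """True when both scores land in the same rubric band (a 'green' cell)."""
    return band_for(score_a, bands) == band_for(score_b, bands)


print(same_band(16, 20, WRITING_MECHANICS_BANDS))  # True: both fall in 16-21
print(same_band(21, 24, WRITING_MECHANICS_BANDS))  # False under the assumed bands
```

Comparing bands rather than raw points treats a 16 and a 20 as agreement, which matches how the table is color-coded.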

How should we interpret these scores? Let’s start with a thought experiment: what if I wasn’t comparing my grades to a Custom GPT, but rather to another professor grading the same essay? I’m confident we would still rank the essays in the same order (Low, Middle, and High), but I suspect that our rubric scores would have quite a bit of variance.

This is exactly what I found with the Custom GPT. It was able to accurately grade the essays using the rubric I provided and ranked the essays in the same order as I did. Although it assigned lower scores across the board, none of them struck me as unreasonable. If anything, the results highlighted my own tendency to fudge student scores upwards a bit. If I had to choose which overall grade was more “accurate” according to the strict letter of the rubric, I’d give the nod to the Custom GPT (i.e., Essay #1 was probably closer to the Custom GPT’s D grade than my own C+ grade).

Can Generative AI give detailed, high-quality feedback on student writing?

In short, yes. The Custom GPT’s feedback was extremely detailed, writing short paragraphs for each of the five categories that included specific examples from the essay along with a summary paragraph at the end (around 500-600 words of total feedback for a 1,500-word assignment). Based on conversations with students over the years, this is a lot more than they receive from most of their professors. For instance, here’s its feedback on Writing Mechanics for Essay #2:

Writing Mechanics (25 pts)

Score: 21/25

The essay is generally clear, but there are some grammatical errors and awkward phrasings. For example, “The movie was written and directed by Michael Crichton a Science Fiction heavyweight who brought us classics such as The Great Train Robbery Jurassic Park and Westworld,” lacks necessary commas for clarity. Another instance is, “The parallels between the guests’ attitude and that of settlers during the 1870s can be seen: treating everything as something they had a right to,” where the colon usage is incorrect and affects readability.

But quantity doesn’t mean quality. How good was this feedback? Here we get more of a mixed bag. With a few exceptions, I thought the Custom GPT did well on the Writing Mechanics, Analysis, and Structure categories. Compare, for instance, our respective feedback on the Structure category for Essay #3:

Custom GPT: “Some paragraphs could be broken down to enhance readability, such as the long paragraph detailing the pole’s origin story and legal aftermath.”

Me, in the margins of the same paragraph: “This is all good, but needs to be condensed down. You can tell the same story without getting bogged down in quite so many details.”

The Historical Perspective category was where the Custom GPT lagged behind. Essay #1 had several big historical inaccuracies that I used as a teachable moment to reinforce some of the course’s big themes that the student seemed to be struggling with. The Custom GPT missed these entirely. In Essays #2 and #3, meanwhile, the Custom GPT said they could have “delved deeper” despite both essays excelling in this category.

At my best, I still think I write higher-quality feedback than the Custom GPT - especially in the context of a history course. But I’m not always at my best. When I’m exhausted and in the depths of grading purgatory, my feedback is probably less concrete, actionable, or consistent from essay to essay. When judged against this bar, I think the Custom GPT did quite well.

Full Feedback

I’m including the full feedback generated by the Custom GPT for each essay - I’d encourage you to take a look for yourself to gauge its quality.

Feedback for Essay #1 (Low Score)

Writing Mechanics (25 pts)

• Score: 16/25

• The essay has numerous grammatical and syntactical errors that affect the clarity and flow of the writing. For instance, the sentence “The handwritten orders from the Commander-in Chief placed even more tensions on previous strained relationships between the white soldiers Apache and Comanche Indians” is a run-on and confusing. Additionally, the phrase “Captain Blocker pretends to wave goodbye and exit the train platform but at the last-minute hops on the very back of the train” is awkwardly constructed and needs better punctuation.

Analysis (25 pts)

• Score: 16/25

• The analysis is basic and often descriptive rather than analytical. For example, the essay states, “The unique journey sets the plot for the whole film traveling from New Mexico to Montana as one cohort,” which describes the plot but does not delve into its significance in the context of Western history. Another instance is, “The theme of trust was non-existent in the beginning but substantially displayed in the end,” which mentions a theme but lacks a deeper exploration of how this theme is developed and its implications.

Evidence (20 pts)

• Score: 14/20

• The essay uses evidence from the film to support its analysis, but it does so sporadically. For example, the essay references, “The film provides the background knowledge needed to better understand the time period this film was capturing,” without providing specific scenes or elements from the film to illustrate this point. Additionally, the statement, “Blocker despised Chief Yellow Hawk and everything that he and is race stood for,” needs more context and direct examples from the film to strengthen the analysis.

Structure (20 pts)

• Score: 14/20

• The structure of the essay is somewhat confusing and difficult to follow. For example, the paragraph that begins with, “Hostiles was released on December 22nd 2017 in the United States,” abruptly shifts focus without a clear transition from the previous discussion about the film’s plot. Another example is the ending paragraph, which mixes plot summary with analysis in a disjointed manner, affecting the overall coherence of the essay.

Historical Perspective (10 pts)

• Score: 6/10

• The essay provides limited historical context and contains some inaccuracies. For instance, the statement, “President Benjamin Harrison personally intervened in this request by Chief Yellow Hawk and ordered white U.S. soldiers to escort the family home,” appears to fictionalize historical events without clarifying the film’s artistic liberties. Additionally, the essay mentions, “The Apaches had no permanent home which is why in this film Chief Yellow Hawk chose their ancestral land of Montana,” but does not delve into the historical accuracy or implications of this portrayal.

Overall Comments

The essay on “Hostiles” offers a basic review of the film but falls short in several key areas. The writing mechanics need significant improvement to enhance readability and clarity. The analysis lacks depth and critical insight, often focusing more on describing the plot than on evaluating the film’s portrayal of the Western United States and its history. The use of evidence is limited, and the essay could benefit from incorporating external sources to support its points. The structure is somewhat disorganized, making it challenging to follow the author’s argument. Lastly, the historical perspective is underdeveloped and contains some inaccuracies.

Final Grade: 66/100

Feedback for Essay #2 (Middle Score)

Writing Mechanics (25 pts)

• Score: 21/25

• The essay is generally clear, but there are some grammatical errors and awkward phrasings. For example, “The movie was written and directed by Michael Crichton a Science Fiction heavyweight who brought us classics such as The Great Train Robbery Jurassic Park and Westworld,” lacks necessary commas for clarity. Another instance is, “The parallels between the guests’ attitude and that of settlers during the 1870s can be seen: treating everything as something they had a right to,” where the colon usage is incorrect and affects readability.

Analysis (25 pts)

• Score: 21/25

• The essay makes solid analytical points that are clearly conveyed. For instance, the comparison of guests in Westworld treating hosts as disposable to settlers treating Native Americans similarly is a strong analytical point: “I thought this was very similar to how one could say that those who ‘settled’ the West treated the natives: with a complete disregard for their humanity and thinking of them as a ‘fascinating but savage peoples’.” Additionally, the exploration of how the show critiques Hollywood’s portrayal of the West is insightful: “It can also be interpreted as a critique of what the media and Hollywood has done to the image of the West we currently have since the writers of the park base their stories on what people think of the West.”

Evidence (20 pts)

• Score: 16/20

• The essay uses appropriate evidence to support its analysis and cites all sources. For example, it references characters and plot points from the show to illustrate themes: “Akecheta is shown to be one of the first hosts to be fully conscious… and is one of the first to realize the horrors and suffering the hosts go through daily as they see their family members die over and over again.” However, the citations from external sources are minimal and could be integrated more effectively into the analysis.

Structure (20 pts)

• Score: 16/20

• The essay has a clear structure, but some transitions between paragraphs could be smoother. For example, the shift from discussing the show’s portrayal of the West to its treatment of characters like Maeve Millay could be more seamless. Additionally, some paragraphs are quite lengthy and could benefit from being broken down into smaller, more focused sections for better readability.

Historical Perspective (10 pts)

• Score: 8/10

• The essay accurately contextualizes its analysis within a historical framework. For instance, it acknowledges the anachronistic portrayal of a character like Maeve: “One could question the accuracy of that occurring in the West due to the fact that it would have been very difficult for that to occur in real life at the time since many people of color were restricted from getting bank loans or being able to open businesses.” However, it could delve deeper into the specific historical inaccuracies or liberties taken by the show.

Overall Comments

The essay on “Westworld” offers a thoughtful and well-structured review, making solid analytical points about the show’s themes and its portrayal of the Western genre. The writing mechanics are generally good, though there are some grammatical errors and awkward phrasings that could be improved. The analysis is strong, with insightful comparisons and critiques, though the use of external sources could be more robust. The structure is clear, but some transitions and paragraph breaks could enhance readability. The historical perspective is well integrated but could be expanded for greater depth.

Final Grade: 82/100

Feedback for Essay #3 (High Score)

Writing Mechanics (25 pts)

• Score: 24/25

• Your essay is well-crafted and largely free of grammatical errors. The writing is clear and sophisticated, with only minor issues. For example, “Though these objects memorialize the past through the recognition they receive and the debate they spark they also tell us something about the present” could benefit from a comma after “spark” for better readability.

Analysis (25 pts)

• Score: 24/25

• You make solid analytical points that are clearly conveyed to the reader. For instance, your analysis of the Pioneer Square Totem Pole’s history and its implications is insightful: “In the mythmaking of Seattle as a progressive bastion the story of the Pioneer Square Totem Pole has been ignored.” Another strong point is your discussion of “compassionate racism” as described by Colleen Echohawk: “While the city aimed to establish public art and recognize Native cultures the theft cultural appropriation and misrepresentation caused more harm than good.”

Evidence (20 pts)

• Score: 18/20

• You use a variety of carefully selected evidence to support your analysis and cite all sources accurately. For example, you detail the historical context and actions surrounding the theft of the totem pole: “In September 1899 the voyagers stopped to visit Fort Tongass… When the Tlingit residents returned to discover that their totem pole was gone the owner contacted the Alaskan governor and filed a lawsuit for the pole plus $20000 in damages.” The use of external sources, such as articles and historical records, strengthens your argument. However, integrating direct quotes from these sources could enhance your points further.

Structure (20 pts)

• Score: 18/20

• Your essay has a clear and effective organizational structure. The narrative builds into a powerful and coherent whole, with elegant transitions between points. For example, the transition from discussing the pole’s history to its current symbolic meaning is seamless. However, some paragraphs could be broken down to enhance readability, such as the long paragraph detailing the pole’s origin story and legal aftermath.

Historical Perspective (10 pts)

• Score: 9/10

• You show a sophisticated ability to weave in helpful historical context and situate your writing within a larger scholarly conversation. For example, your discussion of the pole’s theft and subsequent legal battle provides a nuanced understanding of historical injustices: “The Tlingit residents returned to discover that their totem pole was gone… the tribe ended up settling for $500.” One area for improvement could be a deeper exploration of the broader implications of the totem pole’s theft on Tlingit culture and its long-term effects on the community. This would provide a more comprehensive historical perspective.

Overall Comments

Your essay on the Pioneer Square Totem Pole is well-written and insightful, making strong analytical points about the monument’s history and its implications. The writing mechanics are excellent, with minor improvements needed for even greater clarity. Your analysis is sophisticated, supported by a variety of evidence, though integrating direct quotes from your sources could strengthen it further. The structure is coherent and effective, though some paragraphs could be broken down for better readability. You provide a nuanced historical perspective, and with a bit more depth on the broader cultural implications, it would be even more compelling.

Final Grade: 93/100

💭 Big Takeaways 💭

Overall, the Custom GPT’s performance far exceeded my expectations. In 2023, I asked the free version of ChatGPT (GPT-3.5) to critique a piece of sample writing. It was borderline useless: hallucinating entire passages, misidentifying sections, etc. One year later, a Custom GPT powered by GPT-4 was able to follow the rubric, assign accurate scores for each category, identify strengths and weaknesses, and even pull out direct quotes from the essays to use as examples. Although it missed some of the subtleties and specifics of the assignment, if you told me a teacher wrote this feedback I wouldn’t bat an eye. If anything, I’d compliment their thoroughness and attention to detail.

Even so, I’d want to tinker with the Custom GPT before I would consider using it in an actual course. This might involve writing more detailed prompts, providing examples of my own feedback to use as a model, or building my own version from scratch that was explicitly geared towards a specific course or assignment.
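As a rough illustration of what “more detailed prompts” plus “examples of my own feedback” might look like, here is a minimal Python sketch that only assembles prompt text. It calls no API, `build_grading_prompt` is a hypothetical helper, and the instruction wording inside it is an assumption rather than anything tested in this experiment.

```python
def build_grading_prompt(assignment, rubric, examples):
    """Assemble one grading prompt from the assignment, rubric, and sample feedback.

    examples is a list of (essay_excerpt, instructor_feedback) pairs.
    """
    parts = [
        "You are grading student essays for a history course.",  # assumed wording
        "Assignment description:\n" + assignment.strip(),
        "Rubric:\n" + rubric.strip(),
    ]
    for number, (excerpt, feedback) in enumerate(examples, start=1):
        parts.append(
            f"Example {number} essay excerpt:\n{excerpt.strip()}\n"
            f"Example {number} instructor feedback:\n{feedback.strip()}"
        )
    parts.append("Match the tone and specificity of the example feedback.")
    return "\n\n".join(parts)


# Placeholder inputs; the feedback string is the margin comment quoted earlier.
prompt = build_grading_prompt(
    assignment="Review a piece of pop culture within the Western genre (4-5 pages).",
    rubric="Writing Mechanics, Analysis, Evidence, Structure, Historical Perspective",
    examples=[(
        "(long paragraph on the pole's origin story)",
        "This is all good, but needs to be condensed down.",
    )],
)
print(prompt)
```

Keeping the examples as explicit excerpt-and-feedback pairs makes it easy to swap in comments from a specific course or assignment.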

As tends to happen with Generative AI, this entire experience brought up bigger issues than the narrow technical capabilities of a Custom GPT.

Academic Labor

There is no question that a Custom GPT can “automate the boring” when it comes to grading. It takes me about 15-20 minutes to grade one student essay (leaving comments in the margins, assigning rubric scores, and writing a two-paragraph summary of my feedback). Using a Custom GPT could cut this down to 2-3 minutes per essay (stripping out identifying information, double-checking its output, etc.). With 20 students in a class, that would save me something like 4-6 hours of tedious work per assignment. Multiply this across several assignments per semester, and it quickly adds up.
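The back-of-the-envelope math can be checked with a short Python sketch. The per-essay ranges and class size are the figures given above; `hours_saved` is a hypothetical helper, and pairing the fastest manual time with the slowest assisted time (and vice versa) is an assumption about how to bound the estimate.

```python
def hours_saved(students, manual_minutes, assisted_minutes):
    """Return (low, high) hours saved on one assignment.

    manual_minutes and assisted_minutes are (min, max) per-essay ranges.
    """
    # Smallest saving: fastest manual grading vs. slowest assisted grading.
    low = students * (manual_minutes[0] - assisted_minutes[1]) / 60
    # Largest saving: slowest manual grading vs. fastest assisted grading.
    high = students * (manual_minutes[1] - assisted_minutes[0]) / 60
    return low, high


low, high = hours_saved(students=20, manual_minutes=(15, 20), assisted_minutes=(2, 3))
print(f"{low:.1f} to {high:.1f} hours saved per assignment")
# prints "4.0 to 6.0 hours saved per assignment"
```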

In an ideal world, this kind of tool would free up teachers to spend their time on more meaningful pedagogical work. But we don’t live in an ideal world. Instead, I worry that widespread adoption would only accelerate the devaluing of academic labor. Administrators could easily use it as justification to hire fewer instructors while loading up existing ones with more classes, larger sections, and fewer teaching assistants.

Grades

Watching a Custom GPT capably mimic the grading process has only reinforced how hollow grades can actually be. I like to think that all the hours and thought I put into my feedback are actually helping students learn; that they reflect on my comments and incorporate suggestions into future assignments. And maybe they do. Most of the time, however, I suspect that they anxiously skip to the overall grade, skim through a couple of comments, and that’s about it.

Grades tend to incentivize students in all the wrong ways. The goal for most students on any given assignment is not to learn new things or develop new skills; it’s to get an A (or, rather, to NOT get a B, or a C, or a D). This means that they shy away from taking intellectual risks, experimenting with unfamiliar approaches, or pushing themselves outside their comfort zone. Why risk it?

All of this leads to a dynamic that feels transactional and punitive: “You did X, Y, and Z, here is your reward. You didn’t do X, Y, and Z, here is your punishment.” Guess what? This dynamic will only get worse if students know that a chatbot, rather than a human, is grading their work.

Human Connection

Consider the dystopian and entirely plausible scenario where a student uses ChatGPT to write their essay and a professor uses a Custom GPT to grade it - a (non-)human centipede of utterly meaningless writing and feedback. To demonstrate, I asked ChatGPT (GPT-4) to write an essay based on the above assignment, which I then uploaded to the Essay Grader Professor GPT. It lauded the essay as “well-written and insightful,” giving it an A grade. 🤮

If this scenario makes your skin crawl, you can understand my unease about the loss of human connection in teaching and learning. I see this as part of a wider shift towards impersonalized education. Instructors turn into a disembodied series of pre-recorded lecture videos. Classmates become faceless figures on a Canvas discussion board. Teaching gets reduced to a mechanized content delivery system.

Maybe I’m shouting into the void, but I still think that the most effective teaching comes through empathy and personal connection. A history major applying to law school might need a different kind of feedback than an engineering student taking the class to fulfill their humanities requirement. A flurry of harsh critiques might motivate one student to put in more effort and cause another student to disengage from the class entirely. A Custom GPT doesn’t understand any of this context, instead reducing the relationship between a teacher and a student into a series of automated transactions devoid of meaningful human engagement.