Bottlenecks, Side Quests, and the Calculus of Historical Research

When I was researching my dissertation, I came across an intriguing snippet in an otherwise boring, 900-page government document: a four-page table showing how long it took mail to travel by railway between roughly 130 US cities and twelve major railroad hubs in 1882. This set my wheels spinning: what if I could use this to create a nationwide map of how fast information traveled between different parts of the country and visualize how those connections changed over time?

Then I sat down to actually do this and realized that it would be a giant pain in the ass. I knew this little side quest was technically possible; I just wasn’t sure the analytical payoff would be worth the investment of time and skill to actually execute it. So I abandoned my vision. I downloaded a few PDFs of these transit tables into a folder on my computer, where they sat for the next fourteen years.

Every historian has these kinds of orphaned sources and abandoned side quests. Maybe it’s a collection of petitions you never had time to transcribe or a city business directory that you never got around to organizing into a spreadsheet. They often get left behind because of a basic calculus: is the juice worth the squeeze? Will an uncertain analytical payoff be worth the hours it would take you to transcribe the source or the money it would take to pay a research assistant to do it for you? Even if you did manage to get that directory into a spreadsheet, what then? Maybe you’d want to look for spatial patterns by overlaying all those businesses onto a historical map of the city. But that would mean learning how to use ArcGIS or finding a way to hire or work with someone who already does – all with no guarantee that you’ll find anything of interest. So you move on.

These two bottlenecks – the time and cost of transcribing sources and the technical skill to analyze or visualize them – have traditionally closed off many avenues of historical research before they ever began. In 2026, that’s changed. Projects that would have once required hundreds of hours and some combination of technical training, research funds, collaborators, and research assistants are now within reach for an individual researcher with minimal funding and little to no technical training - and they can be done in a fraction of the time. The reason for this shift? An uptick in the “reasoning” capacities of Large Language Models (LLMs) and a new generation of AI coding agents.

To show what I mean, let’s return to my abandoned mail transit tables that had been sitting on my laptop for the past fourteen years. I recently pulled them up to see if generative AI could help me finally complete my side quest. Over the span of about a month, spread across a half-dozen 1-2 hour sessions, I used Claude Code and Gemini to go from a folder of PDFs all the way to a fully working, interactive web visualization: “How Fast Was the Mail?”

The two bottlenecks that had caused me to abandon this source all those years were no longer major barriers: I didn’t transcribe any data by hand or write a single line of code myself during this process. In the rest of this post, I want to walk through how generative AI tools handled these bottlenecks and some of the larger implications for historical research.

The Transcription Bottleneck

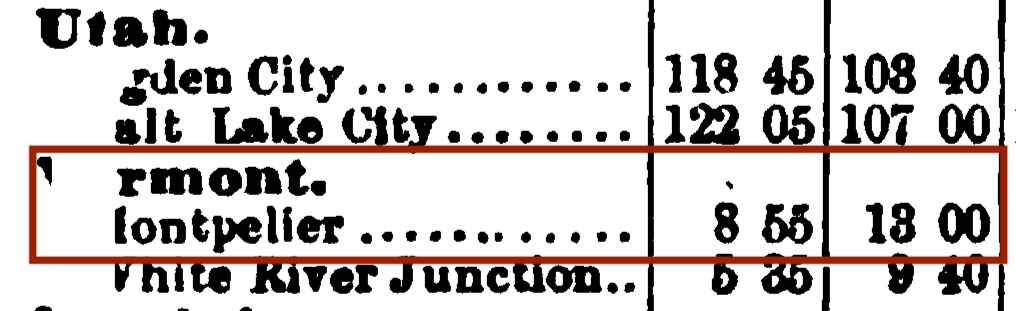

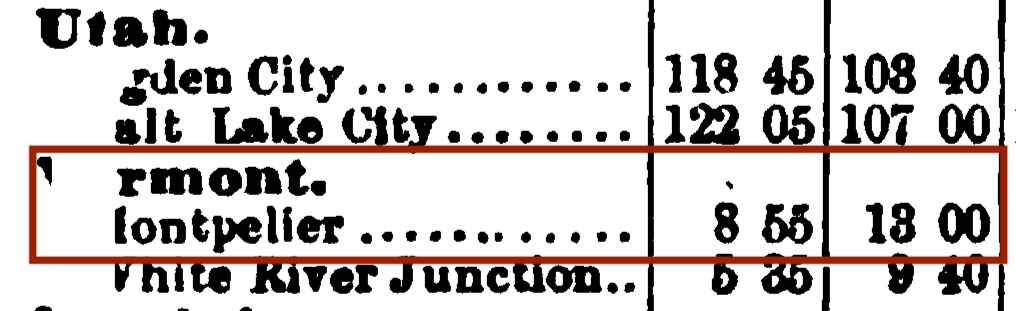

The first step in most digital projects is to take a historical source and turn it into machine-readable data. Traditionally this has required either transcribing it by hand or using Optical Character Recognition (OCR) tools to try to transcribe it automatically. Depending on the source, OCR can be quite challenging. Take my mail transit tables. Beyond the spotty typeface (missing or smudged characters, a 3 that looks like an 8, etc.), these kind of tables present particular difficulties: mess up the alignment for one row or column and that mistake can cascade across the entire document. Traditional OCR tools like ABBY FineReader or Tesseract might see the following:

…and understandably transcribe it as:

rmont.

lontpeller ....•....... 8 55 18 00 Even putting aside these inaccuracies, I didn’t actually want a full character-by-character transcription of the entire PDF. What I wanted was to extract and reformat the underlying data from this source into a usable spreadsheet. This required a certain level analytical reasoning – structuring columns and rows, deciding how to record the time values (hours? minutes?), handling missing values, etc.

This is where Vision Language Models (VLMs) come into play. Traditional OCR tools work through pattern recognition: detect where text appears on a page and then identify individual characters and words. VLMs take a different approach. Rather than processing characters and words in isolation, they integrate visual perception alongside a sophisticated understanding of language and the relationships between words. This allows VLMs to recognize that the above source is a table of cities organized alphabetically by state, that lontpeller falls under the Vermont heading, and that the intended city is therefore likely to be Montpelier.

At least, that’s the idea. When I first pointed Claude Code to my folder of PDFs of transit tables and told it to extract the data from them, it failed miserably. Rather than using a VLM approach, it kept trying to use Tesseract to perform traditional OCR on the documents. So I pivoted to a different generative AI tool: Google’s Gemini 3.1 Pro. After some adjustments to my prompts, I was able to upload a four-page PDF of a single transit table and get back a fully usable, accurately transcribed dataset. Not only did Gemini handle things like missing or smudged letters, it was able to recognize irregularities, maintain table alignment, and reformat everything into a single usable spreadsheet for each PDF – all in just a few minutes.

A sample from Gemini’s “model thoughts” shows the difference between traditional OCR vs. a VLM approach to transcription: I'm now focusing on formatting the data into the "long" format for the CSV, refining the output structure. I will rigorously check OCR outputs, specifically converting "H. M." values to decimal hours, and correcting any potential errors, such as "Little Kock" to "Little Rock." This isn’t pattern matching; this is using reasoning to translate a historical source into usable data – much like a human researcher would do.

In short, Gemini was able to turn this:

…into this:

| year | origin_city | origin_state | destination_city | destination_state | hours |

|---|---|---|---|---|---|

| 1882 | Montpelier | Vermont | Boston | Massachusetts | 8.9167 |

| 1882 | Montpelier | Vermont | New York | New York | 13 |

The technical capacity for accurately transcribing messy historical sources is there, while the usability of these tools is still catching up. My experience with Claude Code – which defaulted to traditional OCR rather than using a VLM approach – shows how uneven the landscape still is. But given the pace of change, it feels like a gap that’s going to close very quickly (if it hasn’t already).

The Coding Bottleneck

The second bottleneck comes down to the technical skill of coding. Any historian can transcribe a transit table into a spreadsheet; the bottleneck is time and labor, not skill. Building a fully interactive, map-based web visualization that responds to user input and presents temporal shifts in spatial data? Vanishingly few historians have the skill and training to do this. If you are not one of those unicorn historians who knows how to define a CSS hover effect or implement a JavaScript event listener, then up until a few months ago your options were limited. You could find a technical collaborator, get funding to hire a developer, or accept the constraints and limitations of out-of-the-box software like Tableau or ArcGIS StoryMaps.

This changed in late 2025, when Anthropic released a major new update for the model behind its agentic coding system, Claude Code. Prior to this, vibe coding (ie. describing what you want in non-technical language and having an LLM write the code for you) necessitated at least some technical fluency, like knowing how to run Python in the command line. Today, that barrier has all but vanished. Someone with zero coding experience can install Claude or OpenAI’s Codex, write a few prompts, and get back a fully functioning website, visualization, or app a few minutes later.

When I sat down to test out Claude Code with my mail transit tables, I decided I was not going to write a single line of code myself. I wasn’t going to review code and approve it, I wasn’t going to tweak variables until something worked, I wasn’t going to lean on prior experience to debug or catch errors. Instead, I channeled a colleague with zero coding experience. I just described what I wanted, in plain language, and let Claude Code do its thing. This was my very first prompt:

There are two files in this folder: a PDF of mail transit times between major cities in 1882 and the transcribed data from that PDF. I want to use the transcribed data to build some kind of interactive app where a user can quickly see and visualize the data.

After some further back-and-forth to help clarify what I wanted, Claude Code worked for about 5-6 minutes. When it finished, I opened the HTML file it had created and immediately said “oh shit.” Here was a fully working and interactive prototype of that original vision I had fourteen years ago when I first stumbled onto this source:

Watching Claude Code build something like this from scratch felt like sorcery. But I would argue that the iterative capacities of agentic coding - the back-and-forth of refining, adjusting, and experimenting that turns a prototype into something you actually want to show people - is even more significant. Iteration has traditionally come with a lot of friction. If you’re writing the code yourself, iteration means trying to change something, breaking your entire project, and spending hours trying to debug it. If you’re working with a web developer, iteration means sending over your proposed changes, waiting for them to implement your request, realizing it made everything worse, and sheepishly asking them to walk it all back.

Vibe coding removes much of the friction of iteration. From my initial prototype, I could easily add additional transit table data from other years, build out more interactive functionality, and make a wide range of design and layout adjustments and tweaks. If something broke, Claude Code was able to fix it. Here is an example instruction I wrote during this process:

When you click or select a hub, have all cities show up in a scrollable table on the right. Add a hover function so that when you hover over a city on the map it subtly highlights it in the table and vice versa. Something is wonky with St. Louis when you click it, it turns grey not blue.

Above all, I was struck by how Claude Code reduced so many of the pain points of coding by hand. To take one example: a few sessions in, I remembered that the economic historian Jeremy Atack had compiled a dataset of historical railroad lines that would be perfect to add to my visualization. If I had been coding this myself, I would have had to wrestle with coordinate systems and map projections – my personal Achilles heel as a spatial historian that would have left me Googling “WGS 1984 shapefile” for the 1,284th time in my career before closing my laptop in frustration. Instead, I just downloaded Atack’s dataset and told Claude Code to add it to the map. And it did, without a single hiccup. As many others have noted: Claude Code take the drudgery of coding and makes it, well, fun.

Is the code behind my visualization especially elegant or well-structured? Probably not! I’m no web developer, but my hunch is that a gargantuan HTML file with 10,600 lines of code is not going to win any awards. But, for my purposes as a researcher, that doesn’t really matter: it just worked.

Side Quests

One obvious effect of the rise of agentic AI and reasoning models is that the field of digital history is now open to a much wider swath of researchers than ever before. A lot of the end products of digital history – online exhibits, project websites, maps, charts, network graphs, etc. – that used to require a certain degree of technical fluency can now be built with Generative AI.

A less obvious effect of these changes is that many more research side quests are now worth pursuing even when you don’t know if they’ll pay off. Side quests are essential to the research process. Even the dead ends are often valuable: a chance for serendipity, learning new skills, reframing research questions, or stumbling into entirely new lines of inquiry. But side quests come with concrete costs. Historians are constantly making judgement calls about which sources to dig into and which to put aside. Ideally, these decisions are driven solely by the research questions themselves; in practice, they involve the kind of calculations I did as a graduate student when I came across that first mail transit table: was the juice worth the squeeze?

And guess what? It wasn’t! As far as I can tell, my snazzy transit visualization shows precisely the kind of unsurprising patterns you’d expect to see: an overall shrinking of informational time-space between 1882 and 1908, lengthy transit lags for the Deep South and patches of the American West, and big leaps in transit gains following the completion of major railroad lines. I could be wrong; maybe something new is hiding in there and some other historian or geographer or communication scholar will find it.

If I had decided to execute my original vision back in 2012, I would have wasted a whole lot of time going down an analytical dead end. At best, I would have lost several weeks that I could have spent on other parts of my dissertation. At worst, I might have fallen prey to the sunk cost fallacy and tried to shoehorn this source into my dissertation just because I had invested so much time working on it.

Fast-forward to 2026. Using Gemini, I could transcribe that same source in a few minutes, at a cost of $0.34.

Breaking some of the traditional bottlenecks to transcription and analysis changes the threshold for whether to pursue a historical research question at all. When the opportunity cost of transcribing a source drops to a few minutes and the cost of a candy bar, the challenge is no longer getting the data – it’s whether the questions you can ask with that data are interesting enough to pursue.

And this is where a different kind of expertise comes into play that has nothing to do with writing code or importing shapefiles: discernment. One feature of LLMs is that they’re eager to give you something. When working with Claude Code, it kept presenting me with what it claimed were interesting patterns in the data. If I were a graduate student, I might have excitedly leapt at these suggestions. But part of becoming an experienced researcher is learning to recognize not just what IS worth digging into but what ISN’T worth pursuing. So when Claude ran a clustering algorithm on cities based on their connectedness and declared “the typology shifts are particularly interesting,” I had the experience to say: “No, they’re not.”

The old bottlenecks were time and technical skill; the new bottlenecks are judgment, discernment, and taste. This is true for many different disciplines, but history seems especially well-positioned to benefit from this shift. Accessing and transcribing archival sources has always been a major sticking point for historical research, and the ongoing decimation of federal grant funding has made that bottleneck even more acute. Vision Language Models don’t just make transcription faster or cheaper - they will (hopefully) expand the kinds of sources we can access and work with.

Meanwhile, coding remains a vanishingly rare skill for historians. For colleagues in the social sciences and hard sciences, agentic coding dramatically speeds up something they could already do. For historians, agentic coding allows us to do things we couldn’t do before. Without training in statistical thinking or computational methods, there’s a risk that we start vibe coding our way to questionable results. But agentic coding in history doesn’t have to mean pumping out a bunch of faulty regression analyses; it can also mean spinning up an interactive web app to explore a set of sources that have been languishing on your computer for the past fourteen years. By breaking the bottlenecks of transcription and coding, these tools free up time and energy for historians to use the skills we actually excel at: close reading, contextual thinking, narrative, and interpretive nuance.